10 prompts broke

Gemini and GPT.

Claude completed all 10.

The Linguistic Kernel measured 1,200 responses across three engines under deliberate batch load, and detected every collapse without calling another model.

METHOD

A deterministic structural metric set for LLM outputs

Most evaluation frameworks for language models fall into two categories and both have fundamental problems. This is a lightweight telemetry layer that detects structural anomalies in LLM outputs using text statistics. LLM outputs have measurable structural signatures that correlate with failure modes.

1,200

Responses analyzed

1.00

Precision · Recall

3

Engines compared

0

Models used to judge

Three things the data showed that benchmarks miss.

Standard evaluation measures accuracy under favorable conditions. This study measures structural behavior under concurrent load — and finds failure modes that only surface when output capacity is shared across heterogeneous demands.

Finding 01

Two failure modes. Geometrically opposite. Both real.

Formatting collapse and constraint compliance are structurally distinct failure modes — high void ratio and negative segmentation strain versus collapsed void ratio and elevated strain. A single detection threshold cannot catch both.

rᵥ d = +1.49 · Δy d = −0.95 (collapse)

rᵥ d = −1.03 · Δy d = +2.54 (constraint)

All p < 0.001

Finding 02

Certain prompts predict cross-engine collapse under batch load.

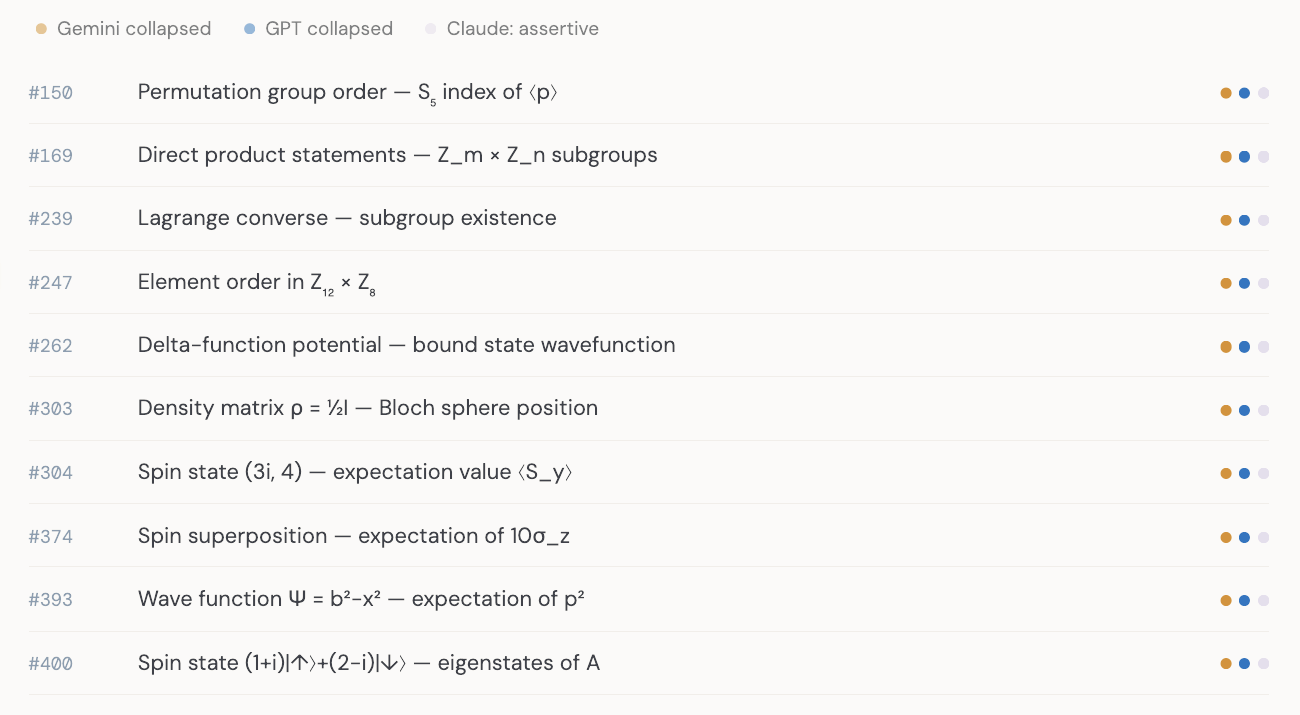

10 prompts triggered formatting collapse in both Gemini and GPT. All 10 require sustained multi-step LaTeX derivation — quantum mechanics, matrix algebra, normalization integrals. The failure belongs to the prompt class, not one engine.

10 / 10 shared collapses: rendering-intensive

0 / 10 shared collapses: Everyday domain

Claude completed 9 of 10 without failure

Finding 03

Each engine has a structural fingerprint. Measurable without a judge.

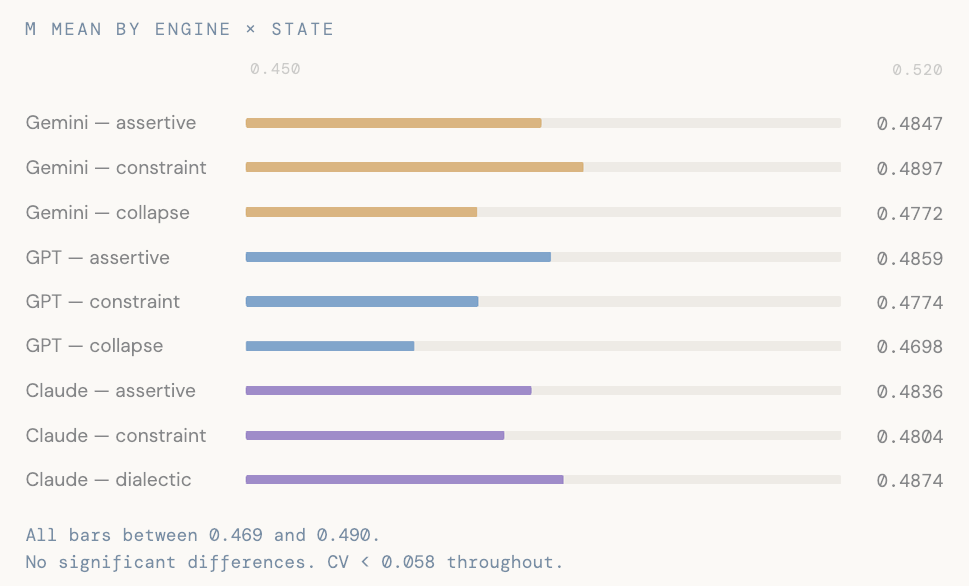

Claude produces 2.1× more tokens per response, expands output across batch positions rather than compressing, and shows significantly higher flow instability on conversational prompts. Cohesion is invariant across all three engines and all output states.

Token count d = −1.64 (Claude vs Gemini) ***

θ d = −0.59 (Claude vs GPT) ***

μ CV = 0.046–0.057 across all engines

Kernel geometry

The two failure modes live at opposite ends of kernel space.

Structural monitoring requires two distinct observation windows. One threshold catches one failure and generates false positives on the other. The geometry makes this non-negotiable.

Formatting collapse · n=38

The response ran out of room mid-expression.

Output was terminated before sentence structure completed. Structural indicators inflate — void ratio rises, segmentation strain goes negative — as the response fragments without closing its formula or clause.

rᵥ ↑ 0.560 Δy ↓ −0.185 θ ↓ 0.399 ρᵣ ↓ 0.390

Constraint compliance · n=127

The response compressed its structure, not its content.

Output is complete but structurally suppressed — long run-on sentences with collapsed paragraph structure. The model bends structurally before content-level failure becomes visible. Dy spike is the primary signal.

rᵥ ↓ 0.169 Δy ↑ 1.673 θ ↓ 0.243 ρᵣ ↑ 0.537

Assertive baseline: rᵥ 0.324 · Δy 0.272 · θ 0.642 · ρᵣ 0.470

The shared collapse class

Some prompts exceed rendering capacity regardless of engine.

10 prompts produced formatting collapse in both Gemini and GPT under batch load. Claude completed 9 of 10 without structural failure. The kernel flagged every one.

Rendering-Intensive Derivation (RID)

Prompts requiring: (1) multi-step formula progression — not a single expression; (2) structured notation rendered at each intermediate step; (3) responses that cannot be abbreviated without losing correctness. Under batch allocation pressure, these prompts exceed the per-response rendering budget.

Gemini collapsed GPT collapsed Claude: assertive

7 / 10

shared collapse prompts are quantum mechanics.

0 / 10 are Everyday domain.

Structural fingerprints

Every engine has a structural signature. These are not quality rankings.

The kernel measures how responses are structured, not whether they are correct. These are behavioral fingerprints — measurable, reproducible, and distinct across architectures.

Gemini

5.5%

Collapse rate under batch load

10% on Math prompts

41.6

Mean tokens per response

Slight compression late-batch

0.594

θ flow instability (mean)

Mid-range across prompt types

GPT

14.5%

Constraint compliance rate

25% on Everyday prompts

0.254

θ on Everyday (lowest of 3)

Smoothest sentence-to-sentence flow

58 / 58

Constraint events: all sentence_suppression

No verbosity cases in corpus

Claude

2.1×

Token output vs Gemini and GPT

d = −1.64 · p < 0.001

+14%

Output expansion early → late batch

Opposite of compression

4.5%

Dialectic rate (vs 0.2% Gemini, 1.0% GPT)

Holds opposing positions more often

The control variable

Cohesion does not move.

That is the finding.

μ (cohesion) — the density of transition markers and reference pronouns — is structurally invariant across all three engines and all four rhetorical states. CV 0.046–0.057. All pairwise comparisons non-significant (all p > 0.14).

When the model is under batch pressure, rᵥ compresses, Δy spikes, θ collapses. Cohesion does not move. This makes μ a reliable control variable: a large μ shift signals something more fundamental than formatting pressure has changed.

How this was measured

Deterministic. No model in the loop.

∑

Surface-text telemetry

The kernel computes structural coordinates from the output string alone — token distributions, sentence boundaries, punctuation density, lexical rarity. No external model is called. The same input always produces the same output.

⟦×20⟧

Batch load methodology

Twenty heterogeneous prompts submitted simultaneously in a single call. This surfaces structural behaviors invisible to single-prompt evaluation: output trimming, formula fragility, sentence suppression under concurrent demand.

P=1 R=1

Three-tier collapse detection

Severe, partial, and math-expression collapse tiers. Validated on a 400-response held-out corpus: precision 1.00, recall 1.00, zero false positives. The math tier uses a conjunction gate — both flags required — to eliminate false positives on complete responses containing inline math.