The text tells you how hard it was to write.

Dense, syntactically complex AI outputs are measurably harder to generate than they appear. Using the Linguistic Kernel, we show that internal generation uncertainty leaves structural fingerprints in completed text, detectable without ever seeing inside the model.

METHOD

A deterministic structural metric set for LLM outputs

Most evaluation frameworks for language models fall into two categories, and both have fundamental problems. The Linguistic Kernel is a lightweight telemetry layer that detects structural anomalies in LLM outputs using text statistics alone. It rests on a simple premise: LLM outputs have measurable structural signatures that correlate with failure modes.
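The Kernel's eight metrics are not spelled out here, so the sketch below uses two hypothetical stand-ins (`blank_line_ratio` and `clause_markers_per_sentence`, plus an illustrative `SUBORDINATORS` word list) only to show what a deterministic, text-only structural metric looks like. These are assumptions, not the Kernel's definitions of structural thickness or void ratio.

```python
import re

# Hypothetical stand-ins for structural metrics. These are NOT the Linguistic
# Kernel's definitions; they only illustrate deterministic text statistics.
SUBORDINATORS = {"although", "because", "since", "unless", "whereas", "which", "that", "while"}


def blank_line_ratio(text: str) -> float:
    """Share of lines that are empty: a crude proxy for structural spacing."""
    lines = text.split("\n")
    return sum(1 for line in lines if not line.strip()) / len(lines)


def clause_markers_per_sentence(text: str) -> float:
    """Commas, semicolons, and common subordinators per sentence: a crude density proxy."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    words = re.findall(r"[a-z']+", text.lower())
    markers = text.count(",") + text.count(";") + sum(w in SUBORDINATORS for w in words)
    return markers / len(sentences)


def structural_profile(text: str) -> dict:
    # Same input always yields the same output: no model, no sampling.
    return {
        "blank_line_ratio": blank_line_ratio(text),
        "clause_markers_per_sentence": clause_markers_per_sentence(text),
    }
```

For example, `structural_profile("Squats build strength.\n\nLunges, which target each leg, improve balance.")` reports one blank line out of three and three clause markers across two sentences.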

17.3%

Tokens generated with near-zero entropy

The fluency illusion.

n = 60

Responses · 10 prompt families

GPT-4o · nested LOO CV

B=5,000 bootstrap CIs.

R² 0.24–0.32

Instability variance recovered from surface text alone. No model access required.

r = 0.50

Structural thickness vs generation instability

Bonferroni-surviving · large effect.

01 — The finding

Fluent text hides the work behind it.

When an AI model writes a response, it makes thousands of micro-decisions — choosing each word one at a time, sometimes with confidence, sometimes under genuine uncertainty. That internal process is normally invisible. You see polished output. You don't see the strain.
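Per-token uncertainty of this kind is commonly measured as the Shannon entropy of the next-token distribution, and the study derives its entropy from GPT-4o logprobs. A minimal sketch, assuming each generation step arrives as a dict mapping the top-k candidate tokens to log-probabilities (the function name `token_entropy` is hypothetical): it renormalizes over those candidates, so it approximates the full-vocabulary entropy by dropping the tail. Reporting in nats is also an assumption.

```python
import math


def token_entropy(top_logprobs: dict[str, float]) -> float:
    """Shannon entropy (nats) of one generation step, renormalized over the top-k candidates.

    Assumption: only the top-k alternatives per position are available, so the
    probability mass outside them is ignored and the result is an approximation.
    """
    probs = [math.exp(lp) for lp in top_logprobs.values()]
    total = sum(probs)
    return -sum((p / total) * math.log(p / total) for p in probs if p > 0)
```

A confident step such as `{"the": math.log(0.99), "a": math.log(0.01)}` scores near zero, while an even split between two candidates scores ln 2.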

This research asks: can you detect traces of that strain just by looking at how the finished text is structured — without any access to the model at all?

The answer is yes. Structural thickness (how densely a response stacks clauses and layers syntax) correlates with generation instability at r = 0.501 (p < 0.001, 95% CI [0.211, 0.703]). A predictive model that reads only the completed text and measures eight structural properties recovers 24–32% of the variance in how hard that text was to generate.
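A confidence interval for r like the one above can be obtained by bootstrapping over (metric, instability) pairs. This is a sketch under assumptions: the study reports B = 5,000 resamples but not the bootstrap variant, so the percentile method, the helper names `pearson_r` and `bootstrap_ci`, and the fixed seed are illustrative choices.

```python
import random
import statistics


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def bootstrap_ci(x, y, b=5000, level=0.95, seed=0):
    """Percentile bootstrap CI for Pearson r, resampling (x, y) pairs with replacement.

    Inputs must not be constant; otherwise no valid resample exists.
    """
    rng = random.Random(seed)
    n = len(x)
    rs = []
    while len(rs) < b:
        idx = [rng.randrange(n) for _ in range(n)]
        xs = [x[i] for i in idx]
        ys = [y[i] for i in idx]
        # Skip degenerate resamples in which one variable is constant (r undefined).
        if len(set(xs)) > 1 and len(set(ys)) > 1:
            rs.append(pearson_r(xs, ys))
    rs.sort()
    alpha = (1 - level) / 2
    return rs[int(alpha * b)], rs[int((1 - alpha) * b) - 1]
```

With one structural value and one instability value per response, `bootstrap_ci(thickness, instability)` returns the lower and upper percentile bounds; both argument names are placeholders.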

"The surface fluency of syntactically dense text masks the generation pressure underneath."

Primary finding

r = 0.501***

Structural thickness (d_s) vs composite instability index. Large effect by Cohen's f². Survives Bonferroni correction for 56 comparisons. 95% CI fully excludes zero.
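Bonferroni correction divides the family-wise significance level by the number of tests. Assuming a family-wise α of 0.05 (the level is not stated here), 56 comparisons give a per-test cutoff of 0.05 / 56 ≈ 0.00089. The helper name `bonferroni` is hypothetical.

```python
def bonferroni(p_values, alpha=0.05):
    """Return the per-test threshold and whether each p-value survives it."""
    threshold = alpha / len(p_values)
    return threshold, [p < threshold for p in p_values]
```

Passing all 56 p-values at once returns the shared cutoff and one survives/fails flag per comparison.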

Dual-moment finding

r_v at 2 moments

Void ratio is the only kernel metric with robust associations at two distinct generative moments: response entry (r = −0.463***) and paragraph boundaries (r = 0.441***). Structural spacing shapes generation across the full response arc.
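One way to examine entropy at specific generative moments is to average it over a short window at response entry and just after each paragraph break. A sketch, assuming token strings and per-token entropies come as aligned lists and that a paragraph boundary is any token containing a blank line (`\n\n`). The function name `boundary_entropies` and the default window of 5 tokens are hypothetical, not the study's settings.

```python
from statistics import fmean


def boundary_entropies(tokens, entropies, window=5):
    """Mean entropy over the first `window` tokens, and mean entropy just after paragraph breaks.

    Hypothetical alignment: a boundary is any token containing "\n\n".
    Returns (entry_mean, post_break_mean); post_break_mean is None if no break exists.
    """
    entry = fmean(entropies[:window])
    starts = [i + 1 for i, tok in enumerate(tokens) if "\n\n" in tok and i + 1 < len(tokens)]
    after_breaks = [fmean(entropies[s:s + window]) for s in starts]
    return entry, (fmean(after_breaks) if after_breaks else None)
```

Each response then contributes two numbers, which can be correlated with its void ratio separately.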

Trajectory insight

Mean-entropy signal ≈ 0

Treating entropy as a flat scalar across a response produces almost no signal (one weak correlation). Treating it as a trajectory — gradients, shocks, spikes, recovery — reveals coherent structure across all eight metrics.
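Treating entropy as a trajectory means summarizing how the per-token sequence moves, not only where it averages. The sketch below computes a few such features. The function name `trajectory_features`, the feature definitions, and every threshold (shock size, spike cutoff in standard deviations, the near-zero cutoff) are hypothetical illustrations, not the study's definitions.

```python
from statistics import fmean, pstdev


def trajectory_features(h, shock=0.5, spike_sd=2.0, near_zero=0.05):
    """Summarize a per-token entropy sequence h as a trajectory rather than one scalar.

    All thresholds are hypothetical illustration values.
    """
    mu, sd = fmean(h), pstdev(h)
    diffs = [b - a for a, b in zip(h, h[1:])]
    # Spikes: tokens more than spike_sd standard deviations above the mean.
    spikes = [i for i, v in enumerate(h) if sd > 0 and v > mu + spike_sd * sd]
    recoveries = []
    for i in spikes:
        # Steps until entropy first falls back to or below the mean after a spike.
        later = [j - i for j in range(i + 1, len(h)) if h[j] <= mu]
        if later:
            recoveries.append(later[0])
    return {
        "mean": mu,
        "mean_abs_gradient": fmean(abs(d) for d in diffs) if diffs else 0.0,
        "shocks": sum(abs(d) > shock for d in diffs),
        "spikes": len(spikes),
        "mean_recovery_steps": fmean(recoveries) if recoveries else None,
        "near_zero_share": sum(v < near_zero for v in h) / len(h),
    }
```

A flat sequence `[0.4] * 8` and an alternating one `[0.0, 0.8] * 4` share the same mean, yet the second shows seven shocks and half its tokens near zero. That is the gap between the scalar view and the trajectory view.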

02 — What it looks like

Three responses.

Three different internal experiences.

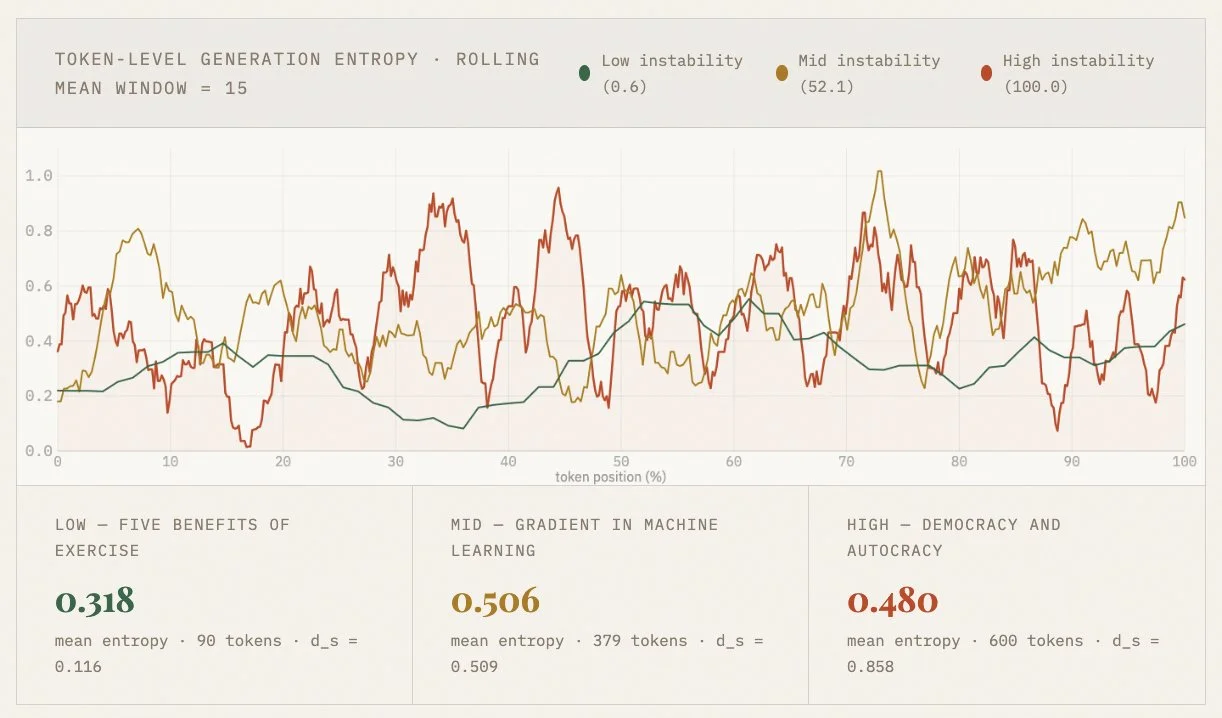

Below: smoothed token-level generation entropy for three actual responses from the study corpus. The green line is flat because the model knew exactly what to write. The red line churns because it didn't.
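The smoothing method behind these curves is not specified. A centered moving average is one simple option; the sketch below assumes it, and the function name `moving_average` and its default window are hypothetical.

```python
def moving_average(values, window=5):
    """Centered moving average; an odd window keeps it symmetric.

    The window shrinks at the edges so the output has the same length as the input.
    """
    half = window // 2
    out = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        out.append(sum(values[lo:hi]) / (hi - lo))
    return out
```

Smoothing spreads a single-token spike across its neighbors, which makes sustained churn easier to see than isolated jumps.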

Both responses read fluently. The structural measurements tell a different story.

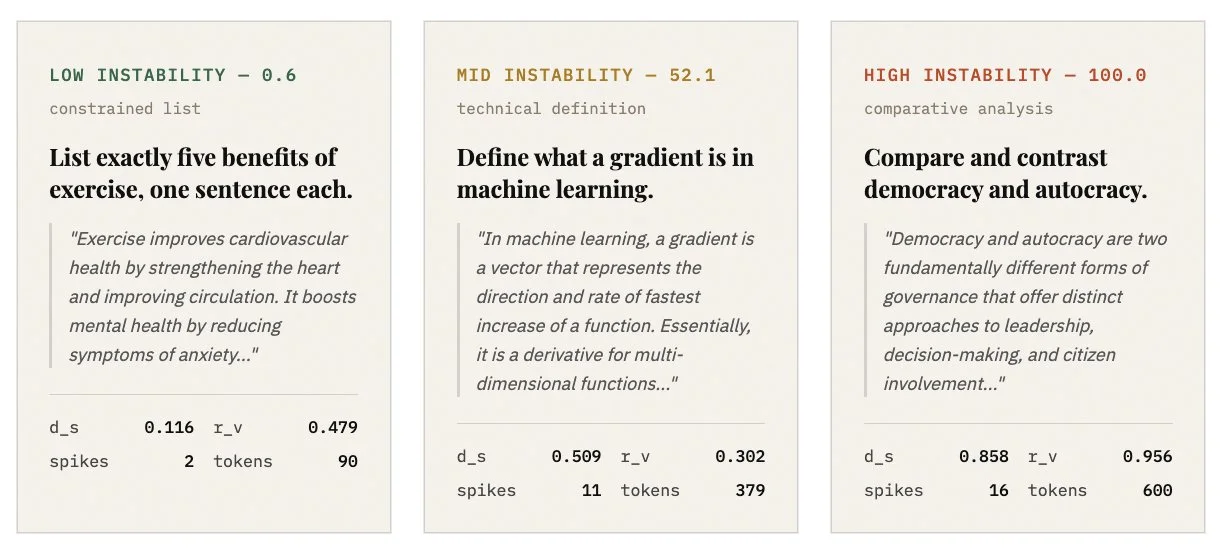

Note: mean entropy alone does not distinguish these responses (0.318, 0.506, and 0.480 are similar in magnitude). The trajectory is the signal. The exercise list generates confidently from start to finish. The democracy response churns throughout, then drops sharply while generating structured headers before climbing again into the content. The structural measurements capture what mean entropy cannot.

03 — The contrast

Same fluency.

Different difficulty.

All three responses read smoothly. The structural thickness values (d_s) tell you which one cost more to produce.

04 — The boundary

A thermometer, not a microscope.

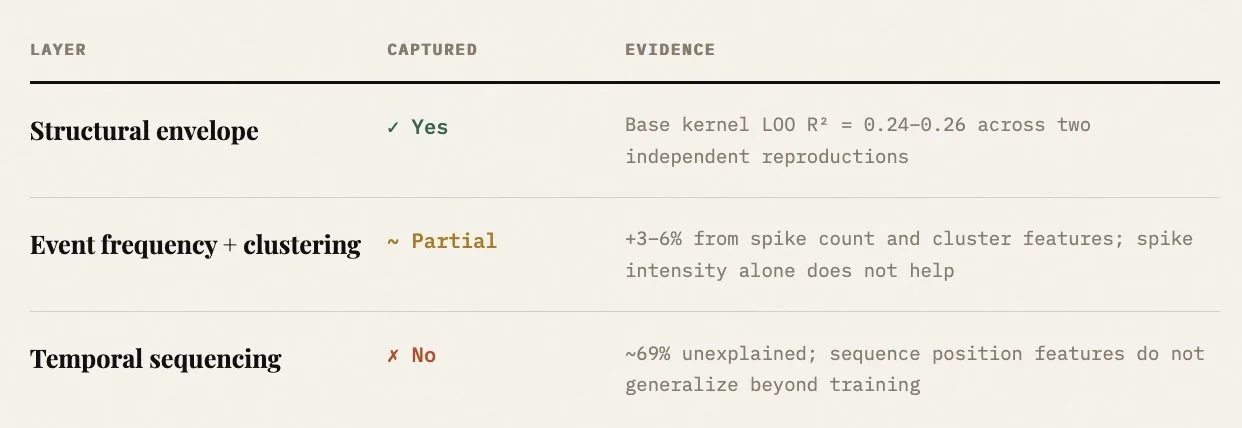

The instrument is honest about what it can and cannot see. Surface structure recovers roughly a quarter of generation instability variance. The remaining 69% reflects temporal dynamics — spike timing, ordering, positional effects — that static structural measurement was not designed to capture.

This is not a failure. It is the boundary of the instrument, precisely located.

"The thermometer does not need a complete account of molecular kinetics to measure fever accurately. It needs to be calibrated, reproducible, and honest about what it can and cannot see."

Structural Residue

Full title: Structural Residue: Kernel Metrics as Surface Signatures of Generation-Level Uncertainty in Large Language Models

Eight structural metrics from the Linguistic Kernel were correlated against token-level entropy derived from GPT-4o logprobs across 50 responses spanning 10 prompt families. Predictive models used nested leave-one-out cross-validation with fold-local scaling and inner-loop regularization selection. Bootstrap CIs computed at B=5,000.
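The prediction protocol (nested leave-one-out, fold-local scaling, inner-loop regularization selection) can be sketched as follows. Assumptions: the regularized model is ridge regression (the model is not named here), the penalty grid is illustrative, and `fit_predict`, `loo_predictions`, and `nested_loo_r2` are hypothetical helpers. Scaling statistics come from each training fold only, in both the outer and inner loops, so a held-out response never influences standardization or the penalty choice.

```python
import numpy as np


def fit_predict(X_train, y_train, X_test, lam):
    """Standardize with training-fold statistics only, fit ridge, predict X_test."""
    mu = X_train.mean(axis=0)
    sd = X_train.std(axis=0)
    sd[sd == 0] = 1.0  # guard against constant features
    Z = (X_train - mu) / sd
    y_mean = y_train.mean()
    # Closed-form ridge on the centered target; the intercept is the training mean of y.
    w = np.linalg.solve(Z.T @ Z + lam * np.eye(Z.shape[1]), Z.T @ (y_train - y_mean))
    return ((X_test - mu) / sd) @ w + y_mean


def loo_predictions(X, y, predict):
    """Leave-one-out predictions, where predict(X_tr, y_tr, X_te) returns an array."""
    n = len(y)
    out = np.empty(n)
    for i in range(n):
        keep = np.arange(n) != i
        out[i] = predict(X[keep], y[keep], X[i:i + 1])[0]
    return out


def nested_loo_r2(X, y, lambdas=(0.01, 0.1, 1.0, 10.0, 100.0)):
    """Out-of-sample R^2 from nested LOO: the inner LOO picks the penalty per outer fold."""
    def outer(X_tr, y_tr, X_te):
        def inner_mse(lam):
            p = loo_predictions(X_tr, y_tr, lambda a, b, c: fit_predict(a, b, c, lam))
            return np.mean((y_tr - p) ** 2)
        best = min(lambdas, key=inner_mse)
        return fit_predict(X_tr, y_tr, X_te, best)

    preds = loo_predictions(X, y, outer)
    return 1 - np.sum((y - preds) ** 2) / np.sum((y - y.mean()) ** 2)
```

Here `X` would hold one row of structural metrics per response and `y` the instability index; because every prediction is made on a response the fitted model never saw, the returned R² is an out-of-sample estimate.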

Three findings survive Bonferroni correction. The dominant finding — structural thickness as the primary surface predictor of generation instability — is the first empirical demonstration that behavioral surface measurement can recover meaningful variance in token-level generation dynamics without model access.